Why Data Center Thermal Management Is the Semiconductor Cycle’s Dirtiest Secret

The thesis in one sentence: The AI hardware arms race has quietly engineered a thermal physics problem that no amount of compute budget can simply buy its way out of — and the companies that own the cooling, the substrate, and the memory infrastructure are the real chokepoints of the next decade.

Table of Contents

1. Introduction: The Thesis & Why Now

2. The Market Opportunity

3. The Core Technology/Mechanism

4. The Key Bottleneck or Problem

5. The Players Racing to Solve It

6. The Economics of the Solution

7. The 7 Powers Moat Breakdown

8. The Fallback Scenario

9. Risks to the Thesis

10. Conclusion: Who Owns the Chokepoint

1. Introduction: The Thesis & Why Now

Here is the dirty little secret buried inside the semiconductor supercycle everyone is talking about: the chips are almost an afterthought now. The real war is being fought at the thermal, substrate, and memory layer — the unglamorous plumbing that decides whether a $30,000 GPU runs at full bore or thermal-throttles itself into mediocrity after 45 seconds.

This is not a thematic riff. It is a supply-chain physics problem with a very specific timeline, and the catalyst cluster that just landed in the past 30 days makes this the most compelling setup in semis since the HBM inflection of late 2023.

Let’s run the tape on what just happened:

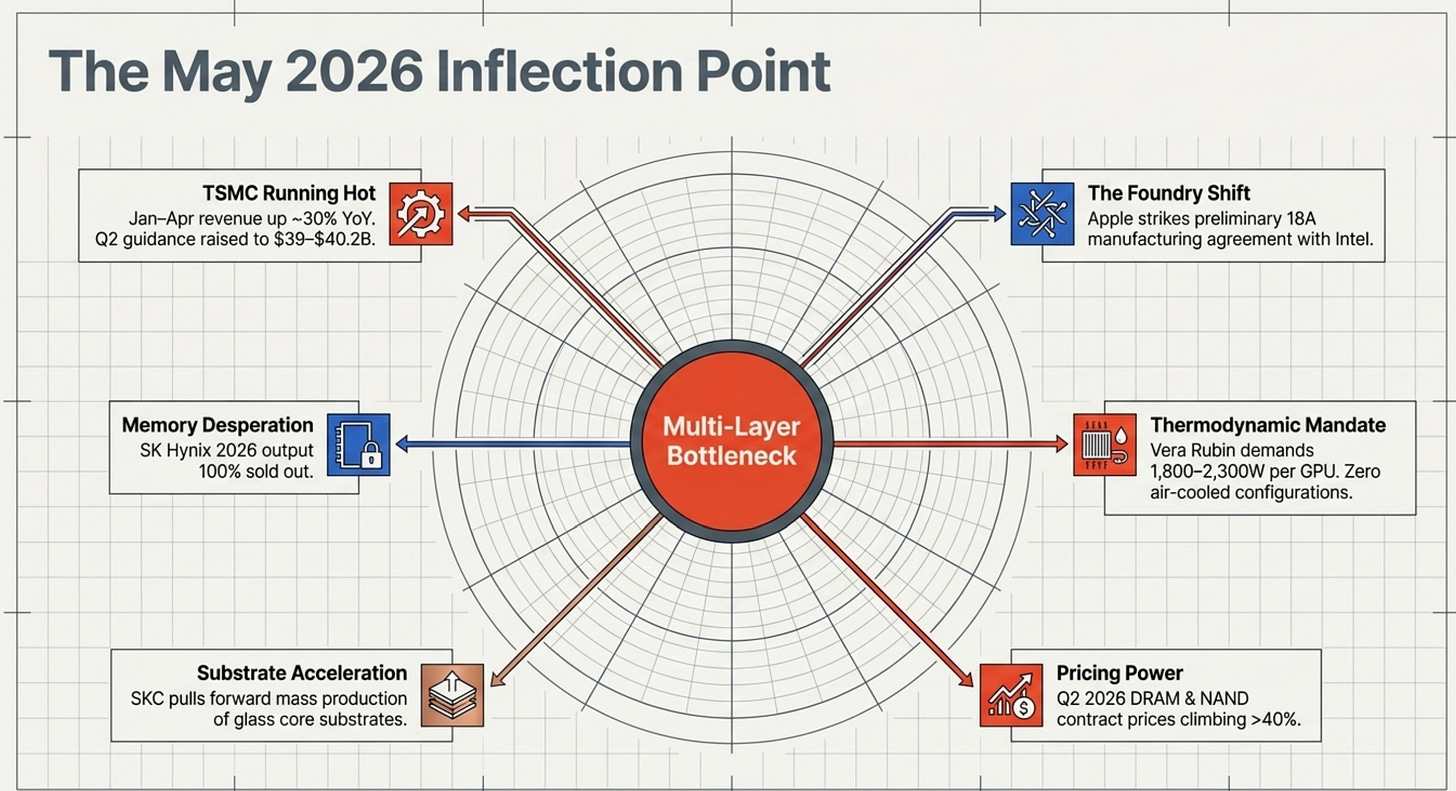

TSMC posted January–April 2026 revenue up ~30% year-over-year, with Q2 guidance of $39–$40.2B. The foundry is running hot — literally and figuratively.

Apple struck a preliminary chip manufacturing agreement with Intel, ending its decade-long TSMC monogamy and igniting Intel’s foundry revival narrative. Intel shares surged nearly 14% on the news. “Made in America” is no longer a slogan; it’s a supply-chain directive.

NVIDIA’s Vera Rubin platform — which ships H2 2026 — runs at 1,800–2,300W per GPU, compared to Blackwell’s ~1,000–1,200W. There is no air-cooled configuration. Zero. Every Vera Rubin deployment requires full liquid cooling. This is a binary forcing function on an entire industry.

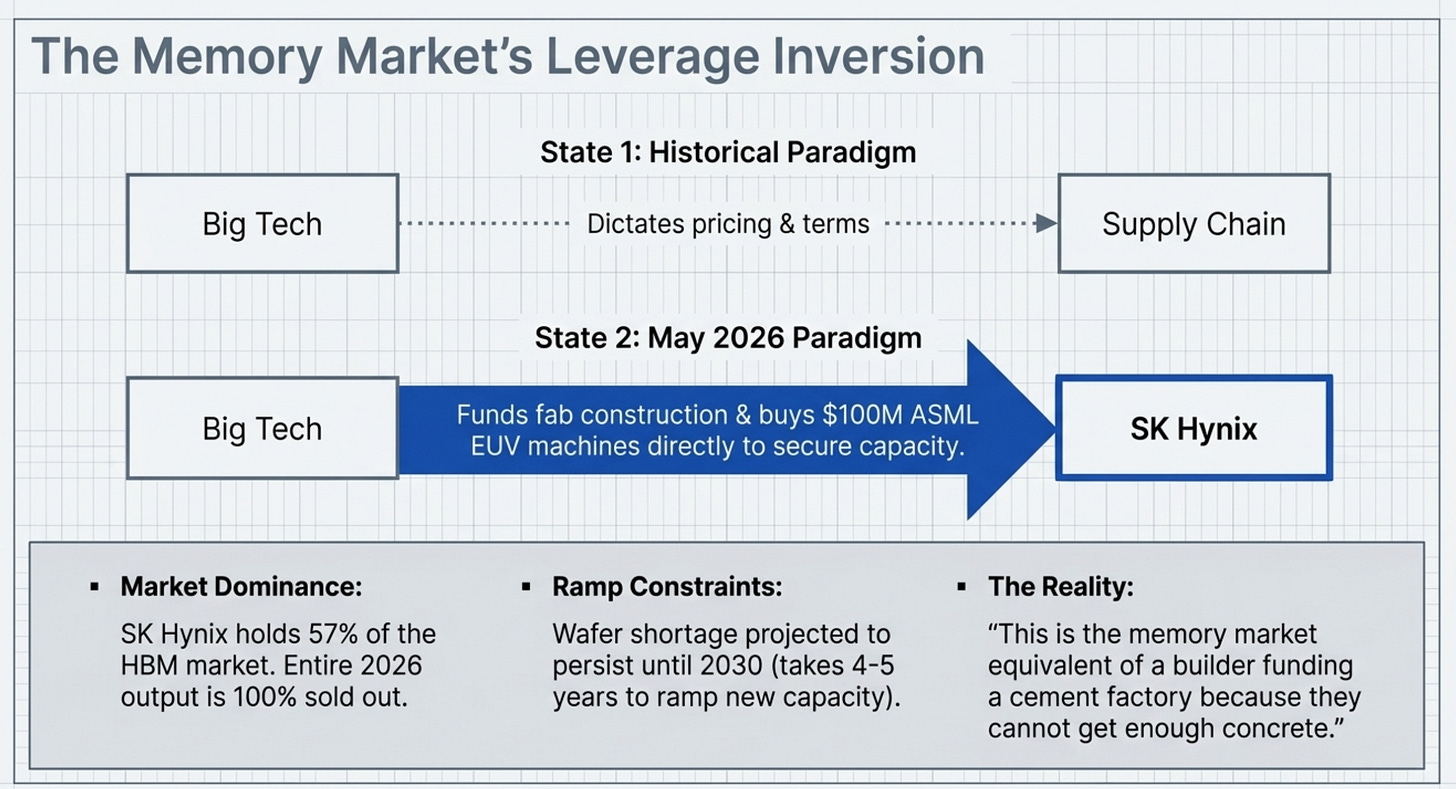

Big Tech (Alphabet, Meta, Microsoft, Nvidia, Amazon) is now offering to buy EUV machines for SK Hynix and fund entire production lines to secure HBM supply, because SK Hynix’s 2026 output is entirely sold out.

SKC is accelerating glass core substrate mass production ahead of schedule, with timelines pulled forward for U.S. clients. Glass substrates — long the “three years away” technology — are arriving faster than the industry expected.

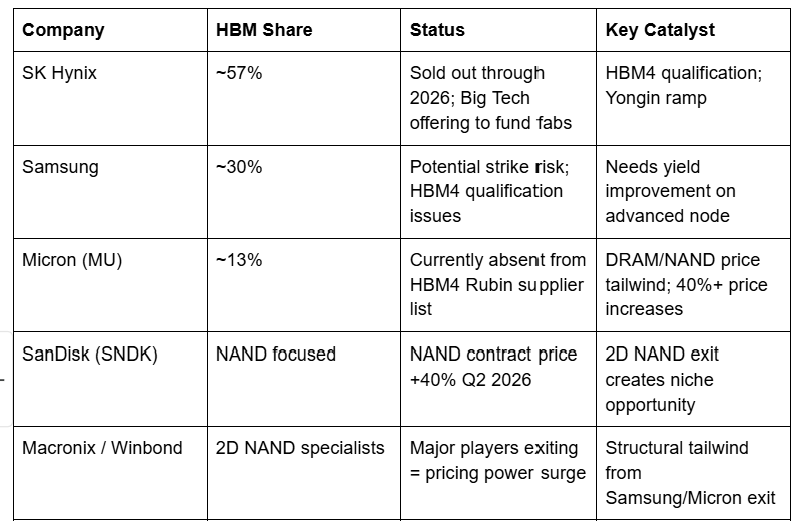

DRAM and NAND flash contract prices are reportedly set to climb more than 40% in Q2 2026, per Adata, with memory spot markets moving toward hourly pricing.

2D NAND is entering structural shortage as Samsung, Micron, and major players rotate capacity to 3D — creating a tailwind for niche specialists like Macronix and Winbond.

Each of these data points is a signal. Together, they form the clearest picture of a multi-layer bottleneck we’ve seen since the foundry crunch of 2021 — except this time, the bottleneck is not a one-dimensional fab capacity issue. It is thermal physics meeting substrate chemistry meeting memory architecture, all converging at the same inflection point.

The companies positioned at those intersections are, to use the technical term, going to go brrr.

2. The Market Opportunity

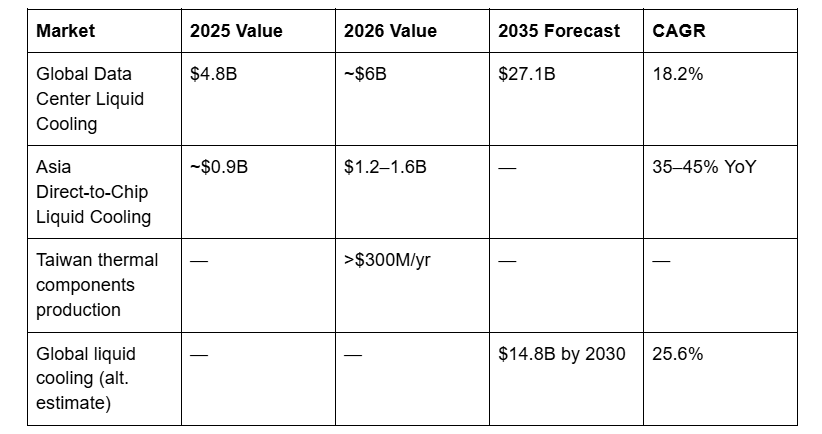

2a. Data Center Liquid Cooling

Let’s start with the most immediate catalyst: cooling. Air cooling is dead for AI. This is not a trend; it is a thermodynamics problem.

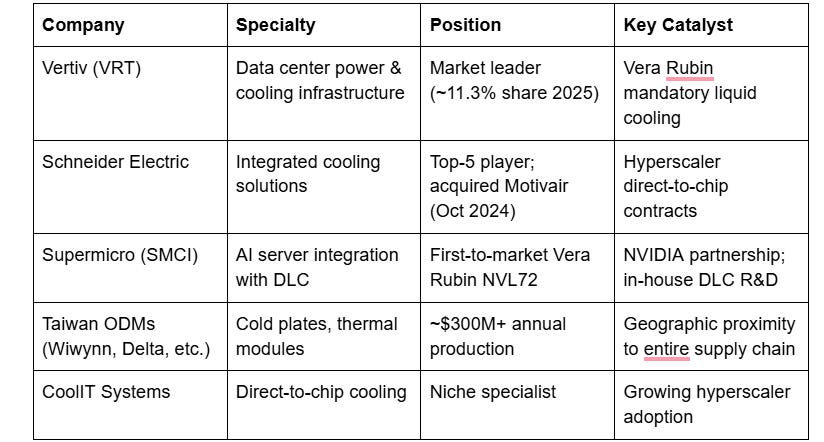

Rack densities that once averaged 15 kW are now hitting 80–120 kW in AI environments. NVIDIA’s Vera Rubin NVL576 configuration pushes an estimated 600 kW per rack. Physics dictates that air, which carries roughly 3,500 times less heat per unit volume than water, simply cannot handle this. The transition is binary and non-negotiable.
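The air-versus-water gap can be checked with a back-of-the-envelope calculation. The sketch below uses textbook room-temperature fluid properties and a 10 K coolant temperature rise; both are assumptions for illustration, not figures from any vendor specification.

```python
# Textbook room-temperature properties (assumed values, not from the article).
WATER_DENSITY = 997.0   # kg/m^3
WATER_CP = 4186.0       # J/(kg*K)
AIR_DENSITY = 1.2       # kg/m^3
AIR_CP = 1005.0         # J/(kg*K)


def volumetric_heat_capacity(density, cp):
    """Heat absorbed per cubic metre per kelvin, in J/(m^3*K)."""
    return density * cp


def volume_flow_m3_per_s(heat_watts, density, cp, delta_t_kelvin):
    """Volume flow needed to carry heat_watts at a given coolant temperature rise."""
    return heat_watts / (density * cp * delta_t_kelvin)


ratio = (volumetric_heat_capacity(WATER_DENSITY, WATER_CP)
         / volumetric_heat_capacity(AIR_DENSITY, AIR_CP))

RACK_WATTS = 120_000    # upper end of the 80-120 kW range quoted above
DELTA_T = 10.0          # hypothetical coolant temperature rise, in kelvin

water_flow = volume_flow_m3_per_s(RACK_WATTS, WATER_DENSITY, WATER_CP, DELTA_T)
air_flow = volume_flow_m3_per_s(RACK_WATTS, AIR_DENSITY, AIR_CP, DELTA_T)

print(f"water/air volumetric heat capacity ratio: {ratio:.0f}")
print(f"water flow needed: {water_flow * 60_000:.0f} L/min")
print(f"air flow needed:   {air_flow:.1f} m^3/s")
```

Under these assumptions the ratio lands close to the 3,500x figure, and the same rack that needs a few litres of water per second would need roughly ten cubic metres of air per second.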

Industry projections suggest liquid cooling will be installed in 40% of data center sites by end of 2026, up from a fraction today. Taiwan has emerged as the dominant manufacturing hub for cold plates and thermal components, with production capacity exceeding $300M annually — a position that is structurally underpinned by proximity to TSMC, NVIDIA ODMs, and the broader supply chain ecosystem.

The thermal management market is not just growing. It is being dragged forward by a hardware cadence that is now essentially annual: GB200 NVL72 in 2024, GB300 NVL72 in 2025, Vera Rubin NVL72 in H2 2026, Feynman in 2028. Each generation roughly doubles power density.

2b. Glass Core Substrates

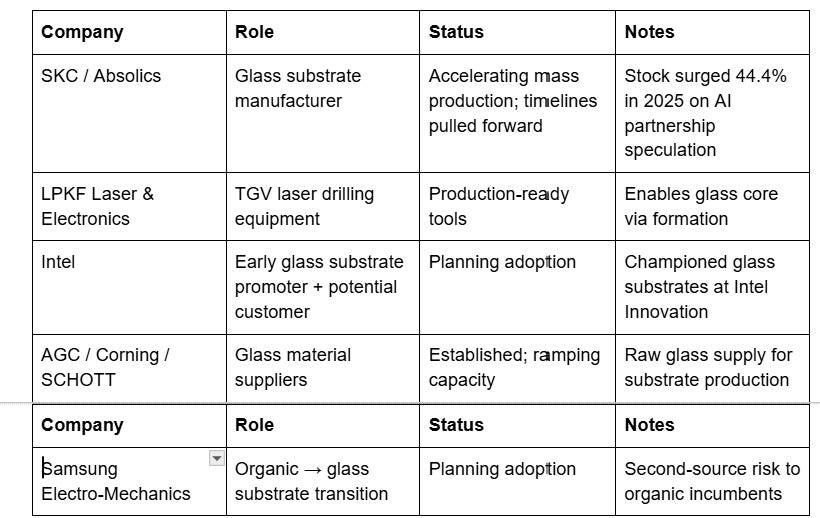

The glass substrate market is the sleeper in this thesis. For years, Intel, AMD, and Broadcom have been planning adoption, and the joke was always that glass substrates were perpetually three years away. That joke just stopped being funny.

The global glass substrate for semiconductors market represents what researchers call “one of the most significant material shifts in the packaging industry in decades.” SKC, the parent of glass-substrate subsidiary Absolics, saw its stock surge 44.4% in early 2025 on reports of advanced negotiations with leading AI chip manufacturers. Market forecasts for 2026–2036 cover seven segments, from carrier glass to photonic integration tiles, with commercial deployment confirmed to be beginning now, not “possibly by 2026.”

2c. HBM & Memory

The HBM market is projected to grow at ~33% annually for the foreseeable future. SK Hynix holds 57% of the HBM market and approximately 32% of DRAM, and its entire 2026 output is spoken for. This is not a demand projection — it is a confirmed sold-out situation. The company’s wafer shortage, per SK Group Chairman Chey Tae-won, could persist until 2030, because it takes four to five years to ramp new wafer production.

DRAM ASPs surged over 60% in Q1 2026. NAND jumped over 70%. Adata projects DRAM and NAND flash contract prices will each climb more than 40% in Q2 2026. This is not cyclical. This is a structurally undersupplied market meeting structurally accelerating demand.
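To see what these moves imply when stacked, here is a minimal compounding sketch. It assumes the Q2 contract-price increase applies on top of the Q1 ASP level (the two are different price measures, so this is only indicative) and treats the "over 60%", "over 70%", and "more than 40%" figures as floors. The five-year horizon for HBM is a hypothetical choice.

```python
def compound(*pct_moves):
    """Chain a sequence of percentage moves into one cumulative multiple."""
    multiple = 1.0
    for pct in pct_moves:
        multiple *= 1.0 + pct / 100.0
    return multiple


# Q1 move followed by the projected Q2 move, using the floor figures.
dram_multiple = compound(60, 40)
nand_multiple = compound(70, 40)

# HBM market at ~33% annual growth over a hypothetical five-year horizon.
hbm_five_year = compound(*[33] * 5)

print(f"DRAM price multiple after two quarters: {dram_multiple:.2f}x")
print(f"NAND price multiple after two quarters: {nand_multiple:.2f}x")
print(f"HBM market multiple after five years:   {hbm_five_year:.2f}x")
```

On these inputs, two quarters of increases more than double the starting price level, and 33% annual growth roughly quadruples the HBM market in five years.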

3. The Core Technology/Mechanism

Why Liquid Cooling Is Non-Negotiable

NVIDIA confirmed at GTC 2026 that it deliberately pushed Vera Rubin’s power target from 1,800W to 2,300W per GPU specifically to compete with AMD’s MI455X. This was a strategic choice to treat a higher power envelope as the price of staying ahead on per-GPU performance.

The consequence: air cooling cannot meet the PUE (Power Usage Effectiveness) targets that regulators and operators increasingly impose. Energy compliance regimes increasingly require PUE under 1.15. Air cooling at Vera Rubin densities would produce a PUE of around 1.5 or higher, a non-starter on both economic and regulatory grounds.
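PUE is the ratio of total facility power to IT equipment power, so the gap between 1.15 and 1.5 translates directly into overhead megawatts. The sketch below illustrates this for a hypothetical 100 MW IT load; the load figure is an assumption chosen for round numbers.

```python
def total_facility_mw(it_load_mw, pue):
    """Total facility power implied by an IT load and a PUE value."""
    return it_load_mw * pue


def overhead_mw(it_load_mw, pue):
    """Non-IT power (cooling, power conversion, lighting) implied by a PUE value."""
    return it_load_mw * (pue - 1.0)


IT_LOAD_MW = 100.0   # hypothetical IT load
PUE_LIQUID = 1.15    # compliance threshold cited above
PUE_AIR = 1.5        # air-cooled estimate cited above

extra_overhead = overhead_mw(IT_LOAD_MW, PUE_AIR) - overhead_mw(IT_LOAD_MW, PUE_LIQUID)

print(f"Total at PUE {PUE_LIQUID}: {total_facility_mw(IT_LOAD_MW, PUE_LIQUID):.0f} MW")
print(f"Total at PUE {PUE_AIR}: {total_facility_mw(IT_LOAD_MW, PUE_AIR):.0f} MW")
print(f"Extra overhead from air cooling: {extra_overhead:.0f} MW")
```

For this hypothetical site, the air-cooled case burns about 35 MW more on overhead than the 1.15 target allows, power that buys no compute.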

The Vera Rubin NVL72 rack uses warm-water, single-phase direct liquid cooling (DLC) with a 45°C supply temperature. This is an elegant engineering choice — by maintaining Blackwell’s 45°C cooling temperature, data centers can cool water with ambient air in many climates, eliminating chiller requirements and dramatically cutting water use. The system connects 72 Rubin GPUs and 36 Vera CPUs through NVLink 6 switches, delivering 3.6 exaFLOPS of NVFP4 inference performance per rack.
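The rack-level figures can be broken down per GPU with simple arithmetic. This sketch uses only numbers quoted in this piece (72 GPUs, 3.6 exaFLOPS, 1,800–2,300W per GPU). The power range it prints covers GPUs only and excludes the Vera CPUs, switches, and other rack components, so it should be read as a lower bound on rack power.

```python
GPUS_PER_RACK = 72
RACK_EXAFLOPS_NVFP4 = 3.6        # NVFP4 inference figure quoted above
GPU_WATTS_LOW = 1_800
GPU_WATTS_HIGH = 2_300

# 1 exaFLOPS = 1,000 petaFLOPS
per_gpu_pflops = RACK_EXAFLOPS_NVFP4 * 1_000 / GPUS_PER_RACK

# GPU-only rack power in kW; CPUs, switches, and pumps are not included.
gpu_rack_kw_low = GPUS_PER_RACK * GPU_WATTS_LOW / 1_000
gpu_rack_kw_high = GPUS_PER_RACK * GPU_WATTS_HIGH / 1_000

print(f"Implied per-GPU NVFP4 throughput: {per_gpu_pflops:.0f} PFLOPS")
print(f"GPU-only rack power: {gpu_rack_kw_low:.1f}-{gpu_rack_kw_high:.1f} kW")
```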

The redesigned internal liquid manifold and universal quick-disconnects support significantly higher flow rates than prior generations. Assembly that once took 1.5 hours for Blackwell now takes ~5 minutes with Vera Rubin. Modular design enables component servicing without draining the entire rack.

For data center operators, the practical effect is binary: facilities without direct-to-chip or immersion liquid cooling cannot host Rubin at all, regardless of available power capacity.

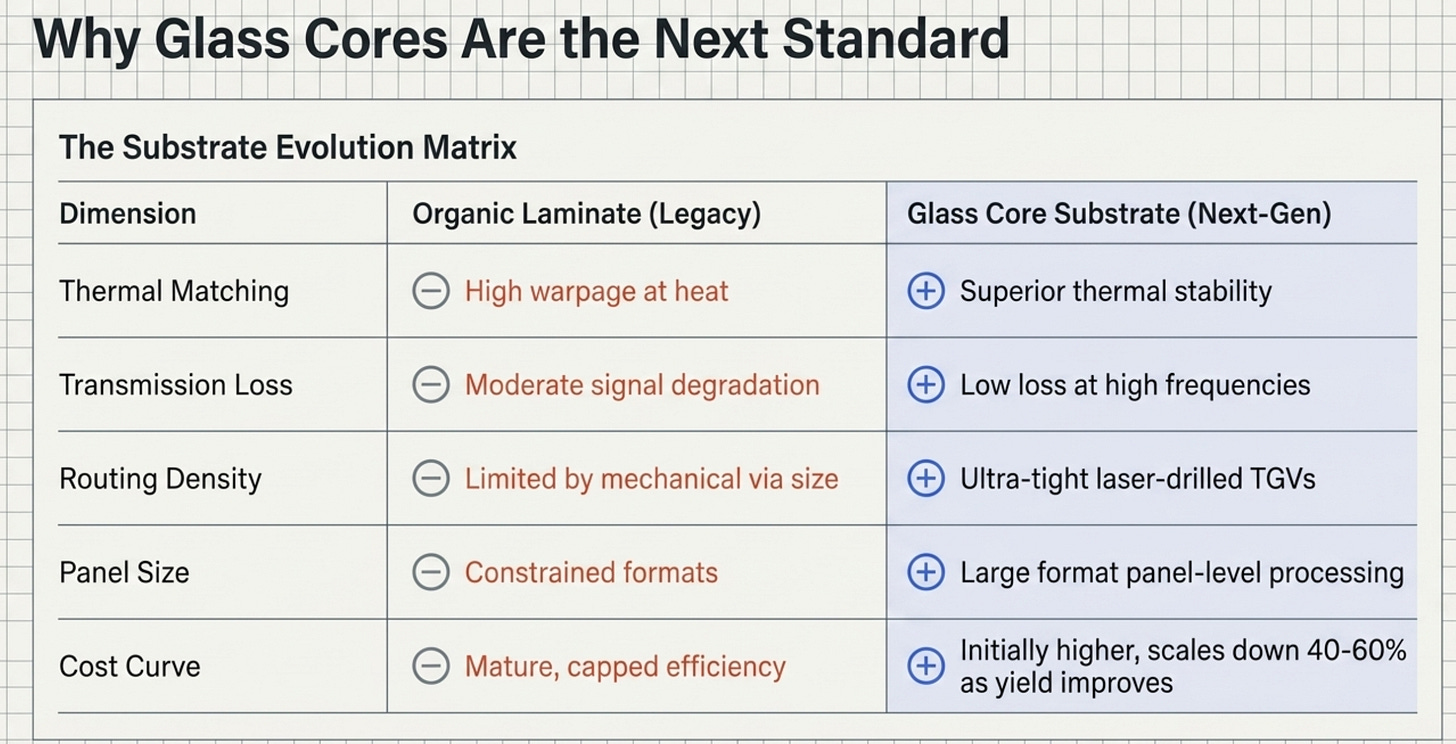

Why Glass Core Substrates Matter

Glass core substrates replace the organic laminate at the center of advanced chip packages. The advantages are substantial:

Superior thermal matching — reduces warpage at high temperatures

Low transmission losses — better signal integrity at high frequencies

Higher routing density — tighter through-glass vias vs. through-silicon vias

Larger panel sizes — enables cost reduction through panel-level processing

The manufacturing process involves Through-Glass Via (TGV) formation — essentially drilling microscopic holes through glass panels and metallizing them to create vertical electrical interconnects. This is precision laser work, which is where LPKF (laser drilling specialist) sits in the value chain.

Glass substrates are critical for the industry’s shift toward chiplets, 2.5D/3D-IC integration, and heterogeneous system architectures — the very architectures that AI accelerators demand.

Why HBM Is Irreplaceable

High Bandwidth Memory stacks DRAM dies vertically with through-silicon vias, delivering memory bandwidth that’s 5–10x what standard DDR5 can achieve. For AI inference and training, memory bandwidth is often the binding constraint — more important than raw compute. This is why every generation of NVIDIA GPU ships with more HBM, not less, and why the next-generation HBM4 and HBM4E represent existential supply concerns for the entire AI buildout.

4. The Key Bottleneck or Problem

The semiconductor supply chain has multiple simultaneous chokepoints right now. Understanding their interaction is key to understanding the opportunity.

Bottleneck 1: Thermal Infrastructure Lag

Data centers were not built for Vera Rubin. Retrofitting an air-cooled facility for Rubin-class density typically requires structural renovation, new plumbing, different power distribution, and months of downtime. The hardware refresh cycle is now annual; the infrastructure upgrade cycle is measured in years. The gap is widening.

Bottleneck 2: HBM Supply / Memory

SK Hynix has zero spare HBM capacity. Its 2026 output is entirely allocated, primarily to NVIDIA. The Yongin Y1 fab won’t reach meaningful scale until mid-2027 at the earliest. The M15X fab in Cheongju is ramping, targeting ~70,000 DRAM wafers per month by end of 2026 — still insufficient. Samsung faces a potential major strike. Micron is absent from HBM4 supplier qualification for NVIDIA’s next platform.

Big Tech is now doing something unprecedented: offering to fund SK Hynix’s fabs and purchase ASML EUV machines (at hundreds of millions of dollars per unit) in exchange for supply guarantees. This is the memory market equivalent of a builder funding a cement factory because they can’t get enough concrete. It tells you everything about supply severity.

Bottleneck 3: Substrate Transition

The industry is mid-migration from organic substrates to glass. The transition cannot be rushed — yield improvement takes time, ecosystem maturation requires multiple customers simultaneously ramping, and the precision manufacturing (TGV formation, metallization, panel-level processing) requires specialized equipment that has long lead times.

Bottleneck 4: Foundry Concentration

TSMC processes roughly 70% of the world’s leading-edge logic. Apple’s preliminary agreement to manufacture Apple Silicon on Intel’s 18A node represents the first meaningful diversification from that monoculture in years. Intel’s Chandler, Arizona plant, producing chips on 18A (meant to rival TSMC’s 2nm node), is the only alternative for leading-edge logic on U.S. soil. The geopolitical risk premium on Taiwan concentration is repricing in real time.

Bottleneck 5: 2D NAND Shortage

As Samsung, Micron, and other major players exit 2D NAND to focus on 3D NAND and HBM, structural supply shortfalls are emerging for the niche applications that still require 2D NAND. Macronix and Winbond — the specialists who stayed — find themselves in an increasingly favorable pricing environment.

5. The Players Racing to Solve It

Competitive Landscape: Thermal Management

Competitive Landscape: Glass Core Substrates

Competitive Landscape: HBM & Memory

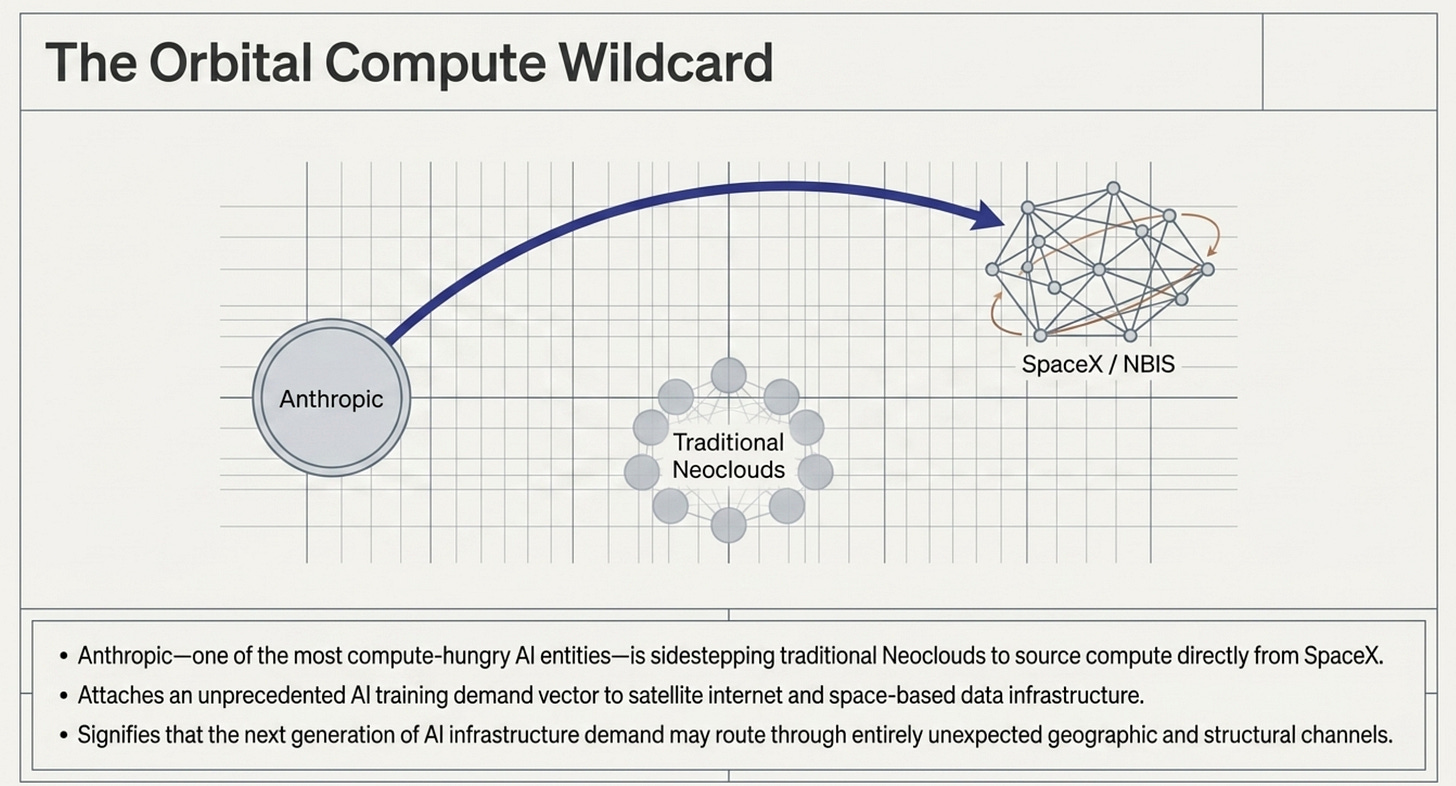

The Wild Card: Nebius Group (NBIS) and the Anthropic/SpaceX Compute Deal

One fascinating development: Anthropic, one of the most compute-hungry AI companies on the planet, sidestepped the established Neoclouds and went directly to SpaceX for compute. The implications for compute demand concentration are significant. SpaceX’s Starship-enabled satellite internet and data infrastructure thesis now has an AI training demand vector attached to it. $NBIS and adjacent compute infrastructure players gain a new demand narrative from this development.

6. The Economics of the Solution

Liquid Cooling Economics

The economics of the liquid cooling transition are compelling from both directions of the trade:

Cost to NOT transition: Vera Rubin facilities that aren’t liquid-cooled get zero Rubin deployments. At $30,000–$40,000+ per GPU, a single NVL72 rack represents $2M+ in hardware sitting idle without compliant infrastructure. The opportunity cost of non-transition dwarfs the capex of retrofitting.

Cost of transition: Retrofitting a legacy air-cooled facility runs $1–5M per megawatt of capacity, depending on structural requirements. This sounds expensive until you compare it to the $400M+ average cost of a new hyperscale data center. As a percentage of total asset value, thermal upgrades are the smallest line item with the biggest productivity impact.

Margin for cooling suppliers: Direct-to-chip cold plates command 40–60% gross margins in current constrained conditions. CDUs (Coolant Distribution Units) carry 35–50% margins. Vertiv, which led with 11.3% market share in 2025, is now seeing ASP expansion alongside volume growth — a rare combination.
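The hardware-versus-retrofit comparison above can be roughed out per rack. The sketch below assumes a 120 kW rack (the top of the 80–120 kW range cited earlier) and allocates the per-megawatt retrofit cost in proportion to rack power; both are simplifying assumptions, since real retrofit budgets are set at facility level.

```python
GPUS_PER_RACK = 72
GPU_PRICE_LOW = 30_000             # USD, low end quoted above
GPU_PRICE_HIGH = 40_000            # USD, high end quoted above
RETROFIT_PER_MW_LOW = 1_000_000    # USD per MW, low end quoted above
RETROFIT_PER_MW_HIGH = 5_000_000   # USD per MW, high end quoted above
RACK_KW = 120                      # hypothetical rack power draw

rack_hw_low = GPUS_PER_RACK * GPU_PRICE_LOW
rack_hw_high = GPUS_PER_RACK * GPU_PRICE_HIGH

# Retrofit cost attributed to one rack, scaled by its share of a megawatt.
retrofit_low = RETROFIT_PER_MW_LOW * RACK_KW / 1_000
retrofit_high = RETROFIT_PER_MW_HIGH * RACK_KW / 1_000

# Best case: cheapest retrofit against priciest hardware; worst case: the reverse.
share_best = retrofit_low / rack_hw_high
share_worst = retrofit_high / rack_hw_low

print(f"GPU hardware per rack: ${rack_hw_low:,} to ${rack_hw_high:,}")
print(f"Retrofit per rack:     ${retrofit_low:,.0f} to ${retrofit_high:,.0f}")
print(f"Retrofit as share of GPU hardware: {share_best:.1%} to {share_worst:.1%}")
```

Even the worst case under these assumptions puts the retrofit at well under a third of the GPU hardware it unlocks, which is the core of the opportunity-cost argument.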

Glass Substrate Economics

The cost structure of glass core substrates is currently unfavorable vs. organic — a fact bears love to cite. Glass is approximately 2–3x more expensive per substrate on a like-for-like basis. However:

Yield improves dramatically with scale — early runs are inefficient; mature processes bring costs down 40–60%

Performance differential widens at advanced nodes — organic substrate warpage and signal integrity limitations become economically intolerable above certain frequencies

The relevant comparison is total package cost, not substrate-in-isolation — glass enables chiplet architectures that would require more expensive alternatives otherwise
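Combining the 2–3x starting premium with the 40–60% cost reduction from mature processes gives a rough range for where glass could land relative to organic. This sketch assumes organic substrate cost stays flat and that the reduction applies to the full glass cost; neither assumption comes from a supplier disclosure.

```python
def mature_premium(initial_premium, cost_reduction_pct):
    """Glass cost relative to organic after a given percentage cost reduction."""
    return initial_premium * (1.0 - cost_reduction_pct / 100.0)


# Best case: smallest starting premium with the largest cost reduction.
best_case = mature_premium(2.0, 60)
# Worst case: largest starting premium with the smallest cost reduction.
worst_case = mature_premium(3.0, 40)

print(f"Mature glass cost vs. organic: {best_case:.1f}x to {worst_case:.1f}x")
```

On these inputs the mature-process range straddles parity, which is why the total-package-cost comparison matters more than the substrate price in isolation.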

LPKF’s laser drilling equipment is the enabling technology for TGV formation. As glass substrate ramp accelerates, LPKF benefits from both the initial equipment capex cycle and the consumables/service revenue on installed base.

Memory Economics

DRAM ASPs surged 60%+ in Q1 2026 and NAND rose 70%+, with another 40%+ projected for Q2. The last time memory had a pricing cycle this strong was 2017–2018, and that produced extraordinary earnings leverage for the memory trio. The current cycle has an unusual characteristic: demand is structurally driven by AI, not cyclically by consumer electronics. The AI infrastructure buildout doesn’t pause for inventory digestion. It accelerates.

SK Hynix’s HBM margins are structurally superior to commodity DRAM — the product is differentiated, switching costs are high (qualification processes are long), and customer willingness to pay is essentially unlimited when the alternative is losing the AI race.

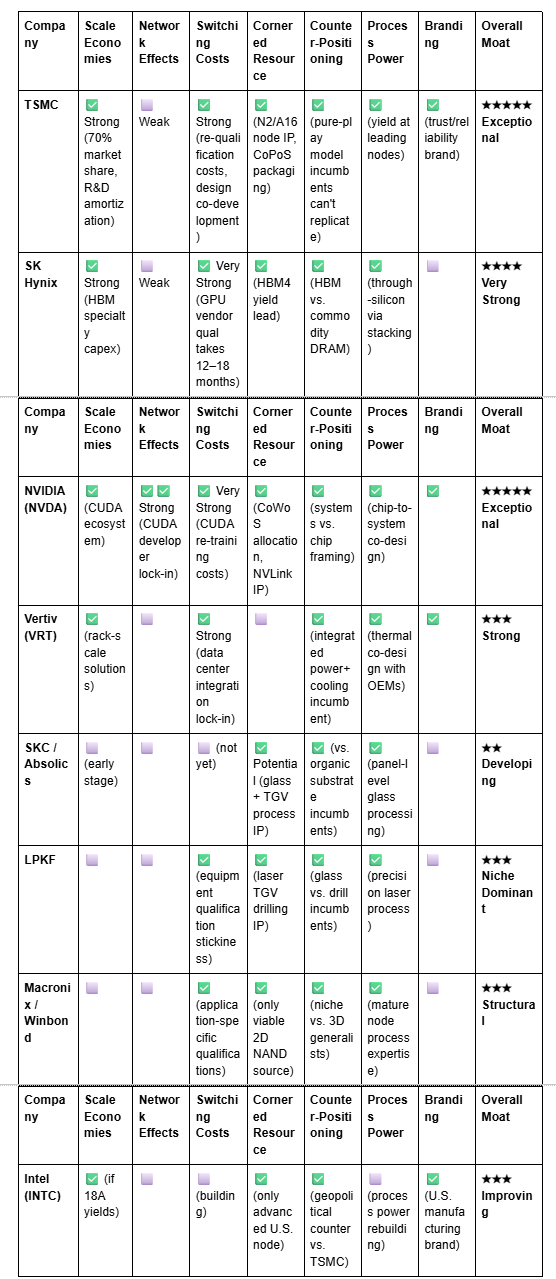

7. The 7 Powers Moat Breakdown

Hamilton Helmer’s 7 Powers framework — applied to the key players in this ecosystem.

Key: ✅ = present strength, ⬜ = absent or weak

The most important observation from this table: TSMC and NVIDIA have exceptional moats across almost every dimension. The interesting asymmetric opportunities lie in the players currently developing moats — SKC/Absolics building process power in glass, LPKF cornering the TGV equipment niche, and Intel rebuilding its counter-positioning against TSMC’s Taiwan concentration with explicit government backing.

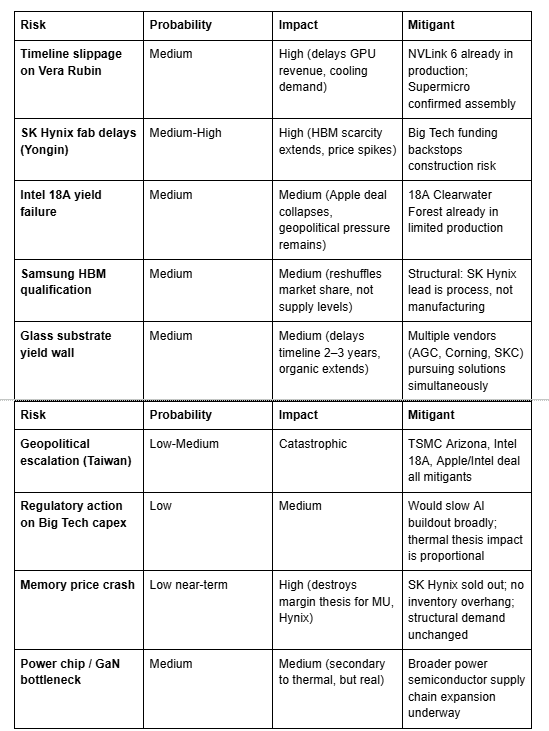

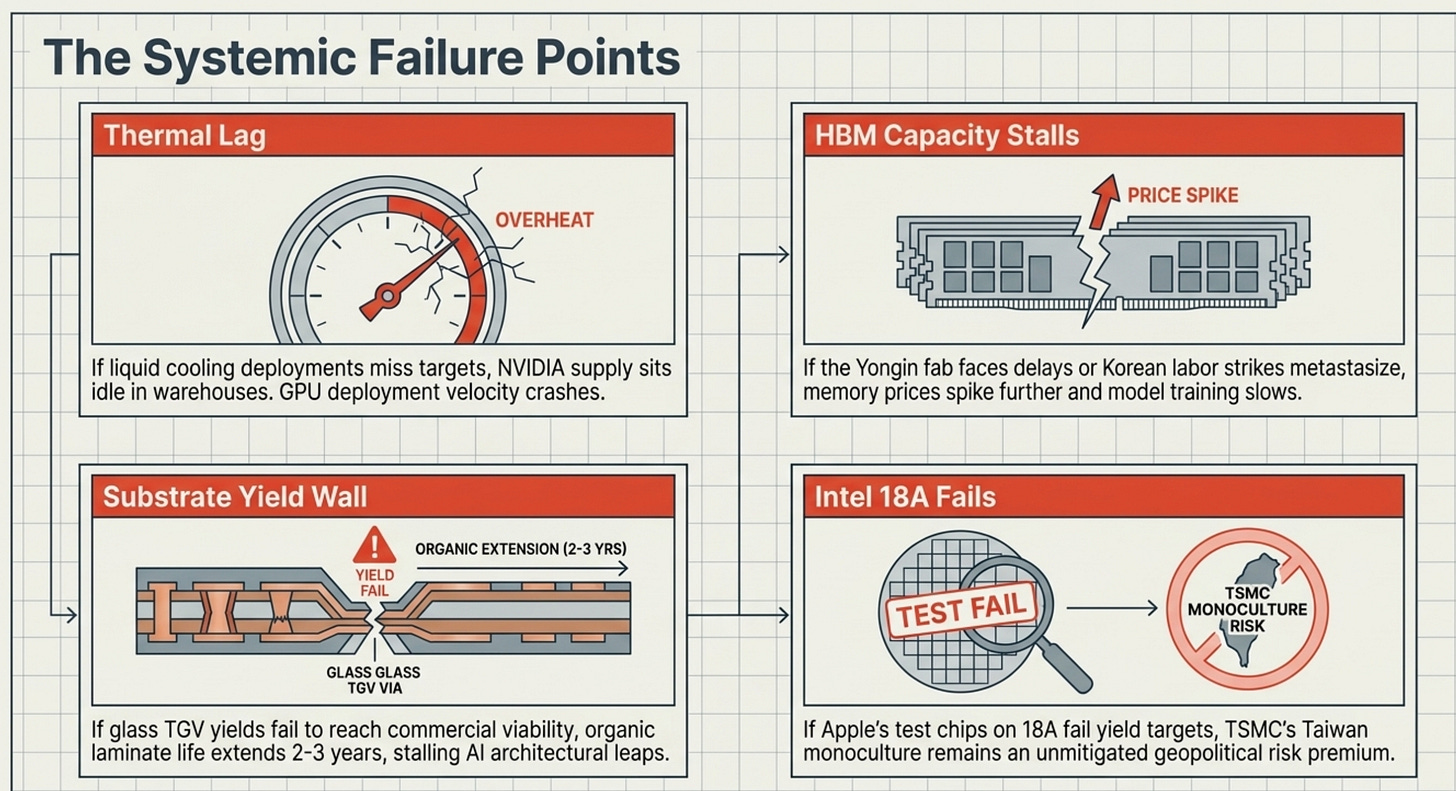

8. The Fallback Scenario

Every thesis needs a bear case. What happens if the bottlenecks aren’t solved in time?

Thermal Bottleneck Doesn’t Clear: If liquid cooling infrastructure doesn’t deploy fast enough to absorb Vera Rubin, the consequence is straightforward: GPU deployment velocity slows. NVIDIA’s revenue is gated not by production but by customers’ ability to install and operate the hardware. This creates a situation where NVIDIA’s supply is sitting in warehouses while customers retrofit data centers — a delay, not a cancellation, but painful for near-term revenue recognition and a gift to anyone short the hyperscaler capex narrative.

SK Hynix Capacity Doesn’t Scale: If the Yongin fab faces construction delays or HBM4 yield challenges, or if the threatened Samsung strike metastasizes into an industry-wide Korean labor disruption, the HBM bottleneck could tighten further. Big Tech’s unprecedented offers to fund EUV machines signal exactly this fear. The fallback for AI companies in this scenario: slower model training cycles, higher inference costs, and potential competitive reordering toward whoever has secured the best long-term memory supply agreements.

Glass Substrates Hit Yield Wall: The glass substrate story has been “almost there” before. If yield improvement stalls at commercially nonviable levels, organic substrates extend their life another 2–3 years. This would be negative for SKC/Absolics and LPKF, neutral to slightly positive for the organic substrate incumbents (Ibiden, Shinko, AT&S), and broadly a delay rather than a cancellation.

Intel 18A Disappoints: The Apple/Intel deal is preliminary. If Intel’s 18A node fails to hit the yield and performance targets required for Apple’s A-series chips, the deal evaporates, Intel shares give back their gains, and TSMC’s monopoly on leading-edge logic remains intact. Given Intel’s recent track record, this is a non-trivial probability.

Macro Demand Destruction: A severe global recession compresses enterprise IT budgets. AI capex, while more resilient than consumer electronics, is not immune to a 30%+ GDP shock. The Mag7’s ability to maintain $200B+ annual capex is contingent on revenue growth; if that growth stalls, the memory and cooling buildout moderates.

9. Risks to the Thesis

The geopolitical risk deserves special mention. The Apple/Intel deal, Intel’s $100B+ U.S. capex commitment, and the U.S. government’s conversion of CHIPS Act grants into an ~10% Intel equity stake are all de-risking tools for the Taiwan concentration scenario. But they’re also indicators that the policy community views the risk as real and material. The Taiwan premium on TSMC-dependent supply chains should be expected to persist, and any player offering a credible alternative will carry a valuation premium.

10. Conclusion: Who Owns the Chokepoint

The semiconductor supercycle has matured past the “build more fabs” phase into a multi-dimensional infrastructure war. The companies that will compound most from here are not necessarily those making the most advanced chip — they’re the ones controlling the bottlenecks that advanced chips can’t function without.

The ranking of chokepoints, by near-term investable urgency:

🔴 Tier 1 — Immediate, Structural, Irreplaceable:

SK Hynix: Owns HBM. Sold out. Big Tech is begging to fund its expansion. The leverage inversion in this market, from tech giants dictating terms to memory makers to tech giants offering to fund memory makers’ factories, is the single most bullish signal in the entire semiconductor ecosystem right now.

TSMC: CoPoS packaging technology. TSMC’s advanced packaging is becoming as important as its leading-edge nodes. CoPoS (Chip-on-Panel-on-Substrate) is a competitive moat that packaging specialists like VisEra are directly adjacent to. Revenue up 30% YTD, Q2 guidance $39–40.2B, 66%+ gross margins.

🟡 Tier 2 — High-Conviction, 12–24 Month Catalyst:

Glass Substrate ecosystem (SKC/Absolics, LPKF): Timelines accelerated ahead of original plans. The pull-forward of glass core mass production for U.S. clients is the tell. When the customer base starts demanding earlier delivery, you don’t argue — you accelerate. LPKF’s equipment is the gating technology for glass TGV formation; this is a classic equipment bottleneck play.

Liquid Cooling infrastructure (Vertiv, Supermicro, Taiwan ODMs): Vera Rubin is a binary forcing function. No liquid cooling = no Vera Rubin deployment. The installed base conversion opportunity is enormous, and the Taiwan thermal management ecosystem is structurally positioned to supply it.

Micron (MU) + NAND specialists: 40%+ Q2 contract price increases. Adata said it. History suggests memory pricing cycles overshoot on the upside. Macronix and Winbond get the structural benefit of major players exiting 2D NAND.

🟢 Tier 3 — Optionality, Monitor Closely:

Intel (INTC): The Apple deal is a validation catalyst, but 18A must deliver. Intel shares are up 200% YTD, yet the company carries a historically punishing record of execution failures. The optionality is real; the execution risk is also real.

Compute infrastructure adjacent to Anthropic/SpaceX thesis (NBIS): The decision to sidestep Neoclouds and go directly to SpaceX for compute is a fascinating signal about how the next generation of AI infrastructure demand will be sourced.

What To Watch — The Key Data Points

SK Hynix HBM4 yield reports — any slip here reshuffles the memory market immediately

Vera Rubin NVL72 first hyperscaler deployments — confirms cooling infrastructure is ready at scale

SKC glass substrate production updates for U.S. clients — the “ahead of schedule” claim needs a follow-up data point

Intel 18A Apple chip production timeline — Q3 2026 should bring clarity on first article yields

DRAM/NAND contract prices — quarterly pricing reports from DRAMeXchange/TrendForce

TSMC Q2 2026 earnings — CoPoS/advanced packaging revenue disclosure

Big Tech capex guidance for H2 2026 — the multiplier on every thesis in this piece

The heat, quite literally, is on. Physics doesn’t negotiate, timelines are being pulled forward, and the companies that own the thermal, substrate, and memory infrastructure are writing the next chapter of the semiconductor supercycle. The AI buildout didn’t slow down because it got expensive — it accelerated. The bottlenecks just shifted from where most people are looking.

⚠️ Disclaimer: This piece is for informational and educational purposes only. Nothing in this analysis constitutes financial, investment, or legal advice. All information is sourced from public disclosures, news reports, and market research as of May 2026. Past performance is not indicative of future results. All investments carry risk, including the potential loss of principal. Do your own research (DYOR) before making any investment decisions. The author may or may not hold positions in securities mentioned.